Introduction: The Hidden Cost of “It Just Works”

When I first migrated this Knowledge Base to Hugo and set up a CI/CD pipeline using GitHub Actions, my primary goal was simplicity. I needed a way to push my Markdown files to GitHub and have a runner automatically compile the static HTML and send it to my Nginx container hosted on a remote VPS.

To achieve this, I used a popular, off-the-shelf SFTP Deployment Action. For the first few days, it was magical. I would commit a new post, and within 2 minutes, the site was live.

“It just works,” I thought. And I moved on to other projects.

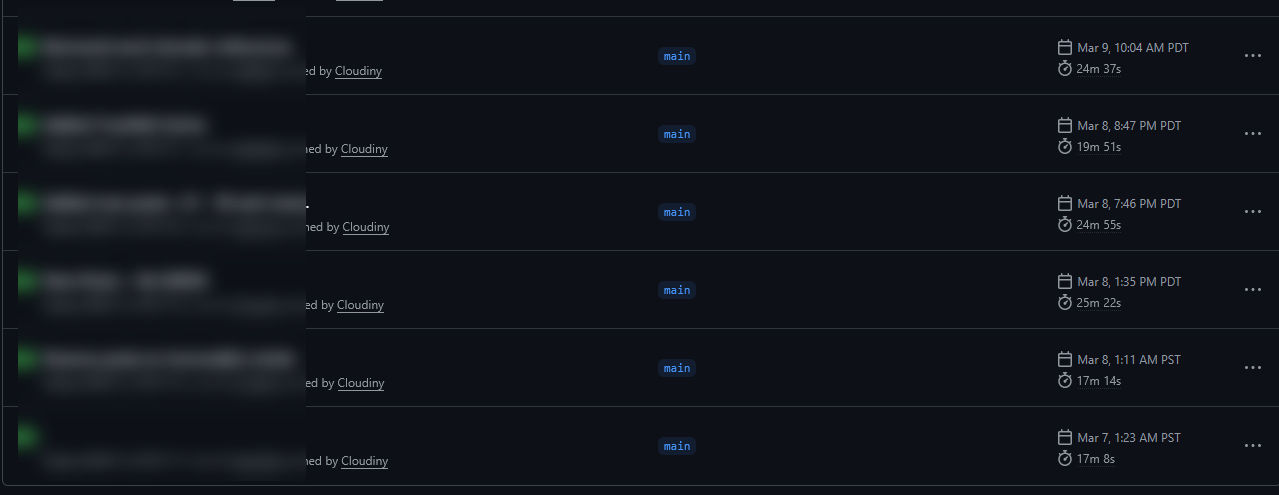

However, as the blog grew from 10 posts to 30, and then to more than 50, packed with high-resolution cybersecurity architecture diagrams, a silent problem was compounding in the deployment logs. My deployment times crept up to 8 minutes, then 16 minutes, and eventually a single typo correction in an old post took almost 25 minutes to deploy.

As someone operating on a free GitHub Actions tier (which limits you to 2,000 CI/CD minutes per month), this was a bottleneck that needed addressing. In this post, I will explain why SFTP—while great for initial simplicity—can become a liability as your site grows, and how swapping to a differential rsync architecture reduced my deployment times by 98%.

The Problem: SFTP is a “Dumb” Protocol

The root cause of the ballooning deployment time was the way a plain SFTP upload works. In my original deploy.yml, the pipeline looked like this:

    - name: Build Hugo site
      run: hugo --source mxlit-site/mxlit-blog --minify

    - name: SFTP Deploy
      uses: wlixcc/[email protected]
      with:
        username: ${{ secrets.FTP_USERNAME }}
        # ... (credentials)
        local_path: './mxlit-site/mxlit-blog/public/*'
        remote_path: '/home/deploy-mxlit/mxlit-site/public'

When you tell an SFTP client to upload a directory containing 500 files, it does exactly that, blindly. It has no concept of “state” or “diffs.” Every time the GitHub Action ran:

- Hugo compiled the site from scratch (taking about 1 second).
- The SFTP Action opened a connection and began uploading index.html.
- Then it uploaded image1.png.
- Then image2.png.
- …and so on, for every single file in the public folder.

Even if I had only changed a single comma in one Markdown file, the SFTP action was ruthlessly overwriting megabytes of unchanged images, CSS, and structural HTML on the remote Nginx server. It was a massive waste of bandwidth and compute time.
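To put rough numbers on that, here is a minimal Python sketch of a blind upload. The file counts, sizes, bandwidth, and per-file overhead are assumptions picked for illustration, not measurements from my pipeline. The point is only that every file pays both its transfer time and a fixed per-file cost, whether it changed or not.

```python
def full_upload_seconds(file_sizes, bandwidth_bytes_per_s, per_file_overhead_s):
    """Estimate a blind upload: every file is sent, changed or not."""
    total_bytes = sum(file_sizes)
    fixed_cost = len(file_sizes) * per_file_overhead_s
    return fixed_cost + total_bytes / bandwidth_bytes_per_s


# Hypothetical site: 450 small HTML/CSS files and 50 large diagrams.
sizes = [20_000] * 450 + [2_000_000] * 50

# Assumed link speed (1 MB/s) and assumed overhead per file (0.5 s).
estimate = full_upload_seconds(
    sizes, bandwidth_bytes_per_s=1_000_000, per_file_overhead_s=0.5
)
print(f"{estimate:.0f} s")  # 359 s
```

In this model the estimate grows with every post and image added to the site, regardless of how small the actual edit was.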

(The screenshot above shows the moment my deployment time hit 25 minutes using the legacy SFTP method.)


The Solution: Differential Syncing with Rsync

To solve this, we must look to a tool designed specifically for mirroring server states: Rsync.

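Unlike a plain SFTP upload, rsync is a “smart” differential tool. When an rsync client connects to a destination server over SSH, the two sides exchange a file list and compare each local file against the copy already on the server. By default that comparison is a quick check of file size and modification time, not a hash. Files that fail the quick check go through rsync’s delta-transfer algorithm, which uses block checksums so that only the parts that differ are sent.

If 499 out of 500 files match what is already on the server, rsync either skips them outright or, when only the timestamp differs (as can happen after a fresh CI build), confirms through checksums that the content is identical and sends almost no data for them. Only the 1 file that actually changed costs real bandwidth.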
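The idea of sending only what changed can be sketched in a few lines of Python. This is a simplified model that compares whole-file SHA-256 digests against a manifest of the previous deploy; it is not rsync's actual algorithm, and the paths and the `files_to_send` helper are hypothetical names used for illustration.

```python
import hashlib


def digest(data: bytes) -> str:
    """Content fingerprint used to decide whether a file changed."""
    return hashlib.sha256(data).hexdigest()


def files_to_send(local: dict[str, bytes], remote_manifest: dict[str, str]) -> list[str]:
    """Return paths that are new or whose content differs from the remote copy."""
    return sorted(
        path
        for path, data in local.items()
        if remote_manifest.get(path) != digest(data)
    )


# Hypothetical previous deploy: 500 pages already on the server.
previous_build = {
    f"posts/{i}/index.html": f"<p>post {i}</p>".encode() for i in range(500)
}
remote_manifest = {path: digest(data) for path, data in previous_build.items()}

# New build: identical except for one corrected typo.
current_build = dict(previous_build)
current_build["posts/42/index.html"] = b"<p>post 42, typo fixed</p>"

changed = files_to_send(current_build, remote_manifest)
print(changed)  # ['posts/42/index.html']
```

Out of 500 files, the plan contains exactly one upload, which is the behavior that makes a differential sync so much cheaper than a blind copy.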

Rewriting the GitHub Action

I immediately stripped out the SFTP action from my .github/workflows/deploy.yml and replaced it with easingthemes/ssh-deploy, which utilizes Rsync under the hood.

Here is the optimized deployment step:

    - name: Deploy via Rsync to VPS
      uses: easingthemes/ssh-deploy@main
      env:
        SSH_PRIVATE_KEY: ${{ secrets.FTP_SSH_KEY }}
        REMOTE_HOST: ${{ secrets.FTP_SERVER }}
        REMOTE_USER: ${{ secrets.FTP_USERNAME }}
        REMOTE_PORT: ${{ secrets.FTP_PORT }}
        SOURCE: 'mxlit-site/mxlit-blog/public/'
        TARGET: '/home/deploy-mxlit/mxlit-site/public/'
        # -rltgoDzvO: recursive; keep symlinks, times, group, owner, and special files;
        #             compress in transit; verbose; skip directory timestamps.
        #             (There is no -p here, so permission bits are not preserved.)
        # --delete:   remove files on the server that no longer exist in the build output.
        ARGS: "-rltgoDzvO --delete"

Notice the critical ARGS variable, specifically the --delete flag. This flag makes the target directory that Nginx serves an exact mirror of the freshly built public folder in the GitHub Actions workspace. If I delete an old image from my repository, Rsync removes the orphaned file from the server on the next deploy, which keeps the server’s storage from filling up with garbage over time. The SFTP action never did that.
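What --delete does to the target can be modeled locally with two temporary directories. The sketch below is only an illustration of the mirroring idea in Python, not how rsync implements it; `delete_orphans` and the directory names are hypothetical.

```python
import tempfile
from pathlib import Path


def delete_orphans(source: Path, target: Path) -> list[str]:
    """Remove files under target that have no counterpart under source."""
    source_files = {p.relative_to(source) for p in source.rglob("*") if p.is_file()}
    removed = []
    # sorted() materializes the listing before any file is unlinked.
    for path in sorted(target.rglob("*")):
        if path.is_file() and path.relative_to(target) not in source_files:
            path.unlink()
            removed.append(path.relative_to(target).as_posix())
    return removed


with tempfile.TemporaryDirectory() as tmp:
    source = Path(tmp) / "public"         # stand-in for the fresh Hugo build
    target = Path(tmp) / "server_public"  # stand-in for the directory Nginx serves
    source.mkdir()
    target.mkdir()
    (source / "index.html").write_text("home")
    (target / "index.html").write_text("home")
    (target / "old-diagram.png").write_text("stale")  # removed from the repo earlier

    removed = delete_orphans(source, target)
    remaining = sorted(p.name for p in target.iterdir())

print(removed)    # ['old-diagram.png']
print(remaining)  # ['index.html']
```

The same property is also the main risk: anything placed in the target directory by hand, outside the build, counts as an orphan and is removed on the next sync.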

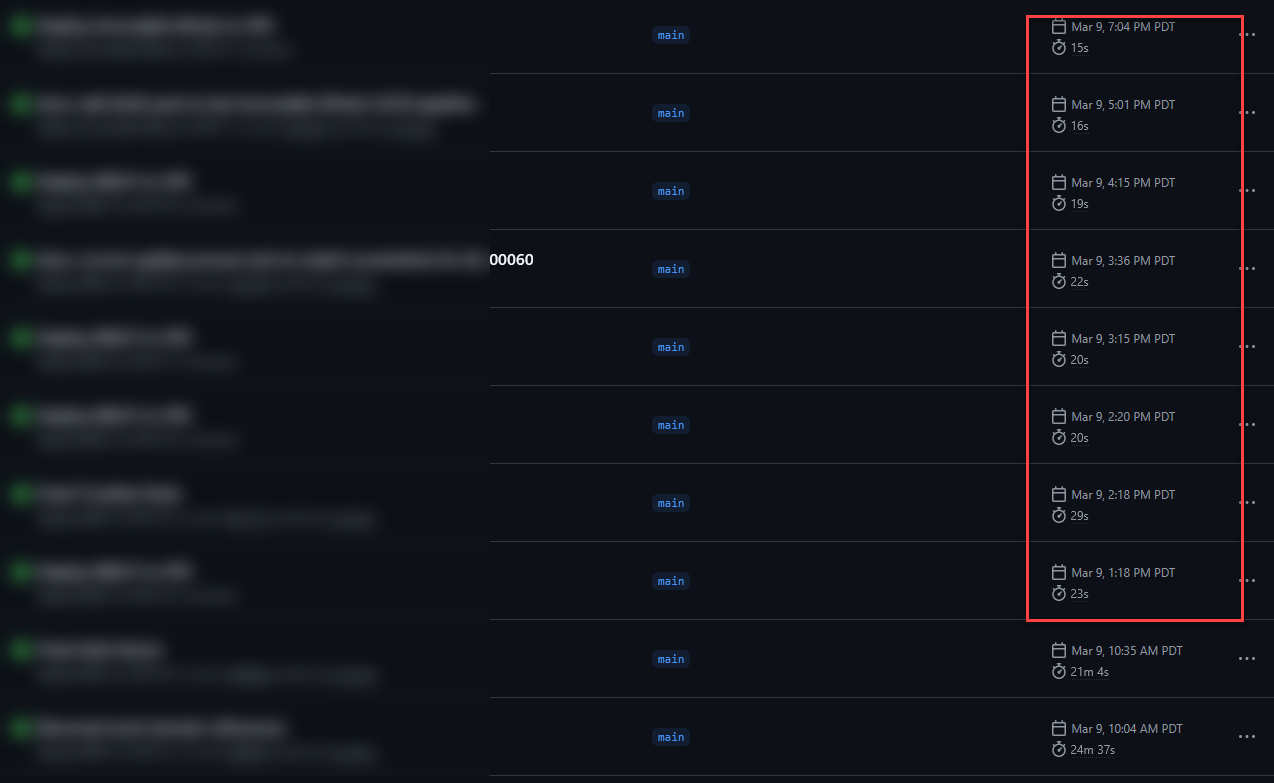

(With Rsync, even with hundreds of files, the deployment now finishes in seconds as only the changes are transmitted.)


Adding the Cron Trigger for Scheduled Posts

While I was rewriting the pipeline, I realized another flaw of deploying a static site only on push. Hugo lets you schedule a post by setting a future date: in its front matter. However, by default Hugo skips any content whose date is still “in the future” relative to the clock of the machine running the build, which here is the GitHub Actions runner rather than the server.

Because Nginx is just a dumb web server serving whatever HTML GitHub Actions hands to it, a post scheduled for 11:00 AM would not go live until my next push if my last one was at 9:00 AM. The HTML simply had not been generated.
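The effect is easy to reproduce with a toy model of the date filter. The Python below is a sketch of the rule as I understand it (content dated after the build time is left out), not Hugo's own code; the slugs, dates, and the `publishable` helper are made up for illustration.

```python
from datetime import datetime, timezone


def publishable(posts: dict[str, datetime], build_time: datetime) -> list[str]:
    """Return the slugs whose date is not in the future at build time."""
    return sorted(slug for slug, date in posts.items() if date <= build_time)


# Hypothetical posts: one already due, one scheduled for 11:00 UTC.
posts = {
    "rsync-vs-sftp": datetime(2024, 1, 10, 8, 0, tzinfo=timezone.utc),
    "scheduled-post": datetime(2024, 1, 10, 11, 0, tzinfo=timezone.utc),
}

at_push = publishable(posts, datetime(2024, 1, 10, 9, 0, tzinfo=timezone.utc))
at_noon = publishable(posts, datetime(2024, 1, 10, 12, 0, tzinfo=timezone.utc))

print(at_push)  # ['rsync-vs-sftp']
print(at_noon)  # ['rsync-vs-sftp', 'scheduled-post']
```

A build at 9:00 never sees the 11:00 post, so something has to trigger another build after that time. Hugo also has a --buildFuture flag that includes future-dated content, but that would publish scheduled posts early, which defeats the purpose of scheduling.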

To fix this, I added a schedule trigger to the top of the deploy.yml:

    on:
      push:
        branches:
          - main
        paths:
          - 'mxlit-site/mxlit-blog/**'
      schedule:
        - cron: '0 * * * *'

Now, GitHub Actions wakes up on its own at roughly the top of every hour (cron schedules are evaluated in UTC, and GitHub may start scheduled runs late when its runners are busy), runs hugo, picks up any posts that have crossed their publish time, and uses Rsync to push the handful of new or changed HTML files to the VPS without me lifting a finger.
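An hourly schedule is only affordable because each run is now short. The back-of-the-envelope Python below checks that against the 2,000-minute monthly allowance. It assumes a 30-day month, a 40-second run (a placeholder for "mere seconds"), and that each run is billed as at least one whole minute, which is my understanding of how GitHub rounds job time and is worth confirming against the current billing documentation.

```python
import math

MONTHLY_ALLOWANCE = 2000  # free-tier minutes per month, as stated above


def monthly_minutes(runs_per_day: int, seconds_per_run: float, days: int = 30) -> int:
    """Billed minutes per month, rounding each run up to a whole minute."""
    billed_per_run = math.ceil(seconds_per_run / 60)
    return runs_per_day * days * billed_per_run


# Hourly schedule with the rsync pipeline (assumed 40 s per run).
hourly = monthly_minutes(runs_per_day=24, seconds_per_run=40)

# The same schedule with the old 25-minute SFTP deploy.
legacy = monthly_minutes(runs_per_day=24, seconds_per_run=25 * 60)

print(hourly, f"{hourly / MONTHLY_ALLOWANCE:.0%}")  # 720 36%
print(legacy, f"{legacy / MONTHLY_ALLOWANCE:.0%}")  # 18000 900%
```

Under these assumptions the hourly trigger uses about a third of the allowance, while the same trigger on the old pipeline would have exceeded it nine times over.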

Conclusion

It takes a bit of technical maturity to look at a 25-minute deployment log and realize that your initial architecture choices, while perfect for getting started, need to evolve. The “If it ain’t broke, don’t fix it” mentality has its limits in Systems Engineering. SFTP wasn’t “broken”; it was just no longer the right tool for a growing Knowledge Base.

By pivoting to Rsync, not only did deployment times plummet from ~25 minutes to mere seconds, but the pipeline gained proper state mirroring (via --delete) and true post scheduling automation. Optimization is a continuous loop, and sometimes, the best lessons come from staring at a red timeout error on a free tier limits page.